August 29, 2019

Restoring Balance between the “M” and “E” in International Development Practice

International development practice has evolved dramatically over the past 25 years. Calls for improved accountability and transparency, sometimes in response to scandal and always in response to the U.S. Congress, have led to a focus on tracking activities. At the same time, donor agencies and philanthropists have emphasized data-driven, independent assessments that identify the impacts of specific activities and better inform future programming.

Monitoring and evaluation, better known as M&E, have long been grouped together. However, for a variety of reasons, the “E” has received more attention within the international development community and among the general public. For instance, evaluation methods are taught as semester-long courses or make up entire graduate degrees, while monitoring approaches might get a few slides as part of a broader lecture.

But ask any evaluator about key lessons learned across programs, and they will often call for better monitoring to track progress. The methods and goals of evaluation are often clear: to understand development outcomes and impacts. Monitoring has suffered from language imprecision: is it performance measurement, performance management, indicator tracking, or just ho-hum everyday activity management and oversight?

But monitoring is currently having “a moment.” As data collection costs have steadily dropped and data systems have improved, implementing partners and donor agencies have found new ways to observe activities as they happen and adjust accordingly. Recognizing the need for timely analysis, many of the leaders in complex evaluation methods have begun shifting their focus to tracking and improving activities closer to real time.

Across the sector, there is a broad understanding that monitoring can be more than an accountability tool (although it is still very much that). In addition to tracking activities across high-level indicators, practitioners recognize that sophisticated monitoring can be used to learn not just what is happening within a program, but how and why. With smart monitoring approaches, critical changes can be made as events unfold without waiting for a final program evaluation or a knock on the door from the Inspector General to identify lessons learned or good practices.

Late last year, we gathered a group of MSI staff to talk about international development trends. Monitoring was the clear favorite.

Yet, despite the trends outlined above, there wasn’t a forum to just talk about monitoring. Sure, there are conferences, but we didn’t want the formal paper presentation version of what our peers are experiencing. A few meetings, many emails, and several months later, we helped organize a half-day event, “New Frontiers in International Development Monitoring,” dedicated to the practice of monitoring. By bringing donors, practitioners, academics, and non-profit leaders to our office, we sought to convene the people thinking about how to restore the balance between the “M” and “E.”

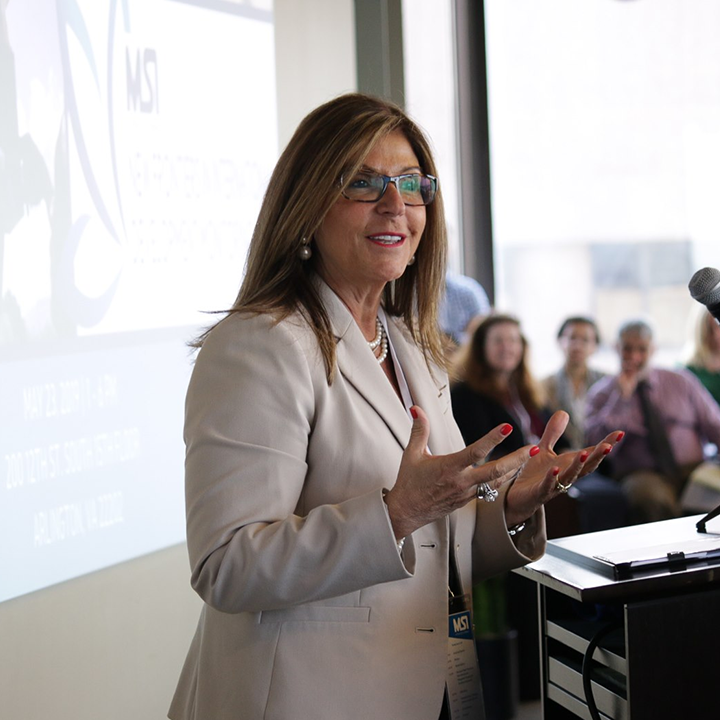

Ann Calvaresi Barr, Inspector General for USAID, the U.S. African Development Foundation, the Inter-American Foundation, the Millennium Challenge Corporation, and the Overseas Private Investment Corporation.

Ann Calvaresi Barr, Inspector General for USAID and several other agencies, highlighted the link and shared purpose between accountability and rigorous M&E. In response to program lapses, the IG’s office now conducts more performance-based audits on top of traditional financial audits. She also outlined the top management challenges for FY2019, which relate primarily to a lack of rigor in M&E, lack of access for proper site visits, lack of documentation, and weak processes and planning. She and her office found that the baseline data needed to determine effectiveness was consistently missing from activities. In the face of all these challenges, she noted that M&E remains “absolutely critical.”

She asked the audience to think critically about the attribution of programming obstacles and their connection to M&E: to determine not just what challenges exist, but why they exist and how they can be mitigated. She ended with a call to find ways to strengthen monitoring. We agree.

At MSI, we believe that monitoring systems need to be strengthened not only to incorporate required indicators, but also to capture actionable data that meaningfully improves service delivery. Too often, monitoring becomes an exercise in writing a quarterly report rather than a useful tool for improving impact.

Over the coming weeks, we will highlight some of the other ideas raised at our event. We hope to connect development practitioners and push the conversation around monitoring forward.